Big Data refers to extremely complex datasets that require advanced computational methods to process, analyze, and extract meaningful insights. In environmental science, Big Data enables climate modeling, pollution monitoring, conservation planning, and agricultural optimization. Environmental data scientists typically earn $65,000-$130,000 depending on specialization and experience, with the field projected to grow significantly as organizations prioritize data-driven environmental decision-making.

If you're passionate about environmental science and fascinated by technology, you're entering the field at an exciting time. Big Data has transformed how we understand and protect our planet, creating career opportunities that didn't exist a decade ago. From tracking deforestation with satellite imagery to predicting climate patterns with machine learning, environmental professionals now use data science to tackle our most pressing ecological challenges.

Here's what makes this field particularly exciting for students: environmental data science sits at the intersection of conservation, technology, and policy. Whether you're interested in protecting endangered species, developing sustainable agriculture systems, or shaping climate policy, data analysis skills will amplify your impact and career prospects.

In This Article...

- What Is Big Data in Environmental Science?

- Why Big Data Matters for Your Environmental Career

- The Evolution of Big Data: From Punch Cards to Planetary Monitoring

- How Environmental Scientists Use Big Data: Career Applications

- Skills and Educational Pathways

- Career Outlook and Salaries

- Advantages of Big Data in Environmental Science

- Current Challenges and Considerations

- Getting Started: Next Steps for Students

- Frequently Asked Questions

- Key Takeaways

What Is Big Data in Environmental Science?

Let's start with a clear definition. Big Data describes datasets so massive and complex that traditional software can't handle them effectively. We're not just talking about large spreadsheets-we're talking about petabytes (millions of gigabytes) of information collected from satellites, ocean sensors, weather stations, and wildlife tracking devices operating 24/7 across the globe.

Big Data is characterized by five key attributes that environmental scientists use to evaluate data systems:

- Volume: Environmental monitoring generates enormous quantities of data every second. Satellite imagery alone produces terabytes daily, while global climate models process petabytes of historical and real-time atmospheric data.

- Variety: Environmental data comes in multiple formats-satellite images, sensor readings, text reports, DNA sequences, and social media posts about local conditions. Modern systems can handle both structured data (organized databases) and unstructured data (images, text, video).

- Velocity: Real-time environmental monitoring requires instant data processing. Early warning systems for natural disasters, water quality monitoring, and air pollution tracking all depend on analyzing data as it's generated to provide timely alerts and responses.

- Veracity: Data accuracy is critical in environmental science. Big Data systems include verification methods to ensure data sources are trustworthy and measurements are reliable, particularly important when informing policy decisions or emergency responses.

- Value: The ultimate test-can we extract meaningful insights from the data? Big Data tools help environmental scientists identify patterns, predict outcomes, and make evidence-based recommendations that would be impossible to discover through traditional analysis.
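As a minimal sketch of how velocity and veracity interact in a monitoring system, the Python below processes readings one at a time, discards missing or physically implausible values, and raises an alert when a rolling average crosses a threshold. The sensor values, plausible range, window size, and alert threshold are all hypothetical and chosen only for illustration.

```python
from collections import deque

# Hypothetical settings for a PM2.5-style air quality sensor (illustrative only).
VALID_RANGE = (0.0, 1000.0)   # readings outside this range are treated as sensor faults
WINDOW_SIZE = 3               # number of recent valid readings to average
ALERT_THRESHOLD = 35.0        # a rolling average above this triggers an alert


def process_stream(readings):
    """Yield (reading, rolling_mean, alert) for each valid reading as it arrives."""
    window = deque(maxlen=WINDOW_SIZE)
    for value in readings:
        # Veracity: skip missing or physically implausible values.
        if value is None or not (VALID_RANGE[0] <= value <= VALID_RANGE[1]):
            continue
        # Velocity: update the rolling average immediately, one reading at a time.
        window.append(value)
        rolling_mean = sum(window) / len(window)
        yield value, rolling_mean, rolling_mean > ALERT_THRESHOLD


# Hypothetical stream containing one fault code (-999.0) and one dropped reading (None).
stream = [12.0, 15.0, -999.0, 40.0, None, 55.0, 60.0]
results = list(process_stream(stream))
for value, rolling_mean, alert in results:
    status = "ALERT" if alert else "ok"
    print(f"reading={value:6.1f}  rolling_mean={rolling_mean:6.2f}  {status}")
```

Because the function is a generator, each reading is handled as soon as it arrives instead of waiting for a full batch, which is the property an early warning system depends on.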

For environmental science students, understanding these principles is increasingly essential. Employers across government agencies, research institutions, and private environmental consulting firms now expect graduates to have at least basic data analysis skills.

Why Big Data Matters for Your Environmental Career

The integration of Big Data into environmental science has created entirely new career pathways. Environmental data analysts are now in high demand at organizations ranging from the EPA to private conservation groups. These professionals combine environmental knowledge with technical skills to transform raw data into actionable conservation strategies.

Consider the skills that employers are seeking today. A recent analysis of environmental science job postings shows that positions mentioning "data analysis," "Python," or "GIS" offer salaries 25-40% higher than similar roles without these requirements. This trend reflects a fundamental shift in how environmental work is conducted-decisions are increasingly data-driven rather than based solely on field observations.

The career advantages extend beyond salary. Professionals with data science skills often find themselves working on high-impact projects: designing national climate adaptation strategies, optimizing renewable energy systems, or developing AI-powered tools for wildlife conservation. These roles offer the meaningful work that draws people to environmental science, amplified by technology's power to scale solutions.

The Evolution of Big Data: From Punch Cards to Planetary Monitoring

Understanding Big Data's history helps contextualize its current importance in environmental science. The concept emerged from a long-standing challenge: we could collect more data than we could effectively analyze.

The modern computing era began in the 1940s when researchers first recognized what they called the "information explosion." In 1965, the US government planned what is often described as the world's first data center, intended to store tax records and criminal fingerprint data on magnetic tape. This was revolutionary at the time, but the real transformation came decades later with three key developments: affordable data storage, powerful processors, and the internet, which enabled global data sharing.

A pivotal moment came in 2000 when researchers quantified just how much information humans produce. Their seminal paper "How Much Information?" revealed that each person generated approximately 250 megabytes of data annually. By 2008, just eight years later, the world's servers processed 9.57 zettabytes-that's 9.57 trillion gigabytes-demonstrating the exponential growth that made Big Data essential rather than optional.
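The unit conversions behind these figures can be checked with a few lines of arithmetic. This sketch assumes decimal (SI) units, where each prefix step is a factor of 1,000; the variable names are illustrative.

```python
# Decimal (SI) storage units, expressed in bytes.
GIGABYTE = 10**9
PETABYTE = 10**15
ZETTABYTE = 10**21

gb_per_petabyte = PETABYTE // GIGABYTE     # one million gigabytes
gb_per_zettabyte = ZETTABYTE // GIGABYTE   # one trillion gigabytes

# The 2008 server figure quoted above, converted to gigabytes.
server_total_gb = 9.57 * gb_per_zettabyte

print(f"1 PB = {gb_per_petabyte:,} GB")
print(f"1 ZB = {gb_per_zettabyte:,} GB")
print(f"9.57 ZB = {server_total_gb:,.0f} GB")
```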

A turning point came in 2012. In March of that year, the Obama administration announced the Big Data Research and Development Initiative, which funded environmental applications including climate modeling, pollution monitoring, and conservation planning. Later that year, President Obama's reelection campaign drew wide attention to the approach by using Big Data analytics to inform its strategy. Today, NASA alone stores over 32 petabytes of climate data, enabling the sophisticated modeling that informs international climate agreements.

How Environmental Scientists Use Big Data: Career Applications

Big Data has transformed virtually every environmental science discipline. Here's how it's applied across different specializations-and what careers it creates.

Climate Science and Atmospheric Research

Climate modeling represents one of the most data-intensive applications in environmental science. Organizations like NASA, NOAA, and international research institutions process petabytes of satellite imagery, ocean temperature readings, atmospheric composition data, and historical climate records to create predictive models.

Career Opportunities: Climate modelers and atmospheric data analysts work for federal agencies (NASA, NOAA), research universities, and international climate organizations. Entry-level positions typically require a master's degree in atmospheric science, environmental science, or data science, with coursework in climate science. Mid-career climate data scientists at federal agencies earn $85,000-$120,000 annually.

What You'd Do: Analyze satellite data to track atmospheric changes, develop predictive models for extreme weather events, create visualizations for policy makers, and collaborate with international research teams on climate projections.

Essential Skills: Statistical modeling, Python or R programming, familiarity with climate science principles, experience with specialized software like MATLAB, and understanding of atmospheric physics.

Conservation and Wildlife Management

Big Data enables conservation efforts at unprecedented scales. Conservation organizations now use GPS collar data, camera trap images, drone surveys, and environmental DNA sampling to monitor species populations and habitat health across entire ecosystems.

Computer vision algorithms automatically process millions of wildlife camera photos, identifying species and behaviors that would take humans years to analyze manually. Satellite imagery tracks habitat loss in near real time, allowing conservationists to respond quickly to threats such as illegal logging or encroachment.

Career Opportunities: Conservation data specialists work for organizations such as The Nature Conservancy, the World Wildlife Fund, government wildlife agencies, and environmental consulting firms. These positions combine field biology knowledge with technical analysis skills. Salaries range from $55,000 to $85,000 for mid-level positions.

What You'd Do: Design monitoring programs using GPS tracking and camera traps, analyze population trends from large datasets, create GIS maps showing habitat corridors and threats, develop machine learning models to process camera trap images, and prepare data visualizations for stakeholders and funders.

Essential Skills: GIS software proficiency, basic programming (Python for data cleaning and analysis), statistics, ecology knowledge, and data visualization tools like Tableau or R Shiny.

Environmental Health and Pollution Monitoring

The EPA and state environmental agencies use Big Data to monitor air and water quality, track pollution sources, and assess public health impacts. High-throughput screening of industrial chemicals, combined with epidemiological data and environmental sampling, provides comprehensive insights into environmental health risks.

Career Opportunities: Environmental health data analysts work for the EPA, state departments of environmental quality, public health departments, and environmental consulting firms that conduct impact assessments. These professionals typically hold master's degrees in environmental health, toxicology, or data science. Salaries range from $65,000 to $95,000.

What You'd Do: Analyze air and water quality monitoring data, identify pollution hotspots and exposure patterns, synthesize data from multiple sources to assess health risks, develop predictive models for pollution dispersion, and create public-facing dashboards showing real-time environmental conditions.

Essential Skills: Laboratory data analysis, statistical methods, environmental sampling protocols, spatial analysis with GIS, and regulatory knowledge (Clean Air Act, Clean Water Act).

Geographic Information Systems (GIS) and Spatial Analysis

GIS has always relied on data, but Big Data has transformed what's possible. GIS technicians and analysts now work with real-time satellite feeds, drone imagery, social media location data, and sensor networks to create dynamic, continuously updating maps.

During natural disasters, GIS professionals synthesize multiple data streams-satellite imagery showing damage, social media reports from affected areas, transportation data, and utility outage information-to help emergency responders prioritize resources. This same approach aids urban planning, wildfire prediction, and environmental justice research.

Career Opportunities: GIS specialists work across nearly every environmental sector-government agencies, urban planning departments, environmental consulting, utilities, and non-profits. Entry-level positions start around $50,000-$65,000, with experienced specialists earning $75,000-$100,000.

What You'd Do: Create and maintain spatial databases, analyze geographic patterns in environmental data, develop interactive maps for decision-makers, integrate data from multiple sources (satellites, drones, sensors), and program custom GIS applications using Python or JavaScript.

Essential Skills: ArcGIS or QGIS proficiency, Python programming (ArcPy library), spatial statistics, remote sensing interpretation, database management (PostgreSQL with PostGIS), and web mapping frameworks.

Agricultural Technology and Food Systems

Precision agriculture uses Big Data to optimize crop yields while minimizing environmental impact. Farmers and agricultural scientists analyze soil sensors, weather data, satellite imagery, and historical yield information to make planting, irrigation, and fertilization decisions field-by-field or even plant-by-plant.

This data-driven approach is particularly crucial for developing countries where marginal landscapes-areas with poor soil, limited water, or extreme conditions-must be used efficiently. Big Data helps identify which crops will thrive in specific microclimates and how to manage resources sustainably.

Career Opportunities: Agricultural data scientists work for agtech companies, agricultural research stations, farming cooperatives, and companies developing precision agriculture tools. The field is growing rapidly as farms adopt smart technologies. Salaries range from $70,000 to $110,000.

What You'd Do: Analyze crop performance data to optimize planting strategies, develop machine learning models predicting pest outbreaks or disease, create recommendation systems for irrigation and fertilization, process satellite and drone imagery to assess plant health, and design decision-support tools for farmers.
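To make "processing imagery to assess plant health" more concrete, here is a minimal sketch using the Normalized Difference Vegetation Index (NDVI), a widely used measure computed as (NIR - Red) / (NIR + Red). The article does not name a specific method, so NDVI is an illustrative choice here, and the reflectance grids and the stress threshold are hypothetical.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel's reflectance values."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero for pixels with no signal
    return (nir - red) / total


# Hypothetical 2x3 reflectance grids (values between 0 and 1); not real imagery.
nir_band = [[0.60, 0.55, 0.20],
            [0.58, 0.30, 0.10]]
red_band = [[0.10, 0.12, 0.18],
            [0.09, 0.25, 0.10]]

# Compute NDVI pixel by pixel.
ndvi_grid = [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
             for nir_row, red_row in zip(nir_band, red_band)]

STRESS_THRESHOLD = 0.3  # hypothetical cutoff; real thresholds depend on crop and season

# Collect (row, column) positions whose NDVI falls below the cutoff.
stressed = [(row, col)
            for row, values in enumerate(ndvi_grid)
            for col, value in enumerate(values)
            if value < STRESS_THRESHOLD]

print("Pixels flagged for follow-up:", stressed)
```

Healthy vegetation reflects strongly in near-infrared and absorbs red light, so higher NDVI values generally indicate denser, greener cover. In practice the same calculation would run over full image arrays instead of nested lists.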

Essential Skills: Agricultural science knowledge, machine learning, remote sensing, IoT sensor data processing, statistical modeling, and practical understanding of farming operations.

Genomics and Biodiversity Research

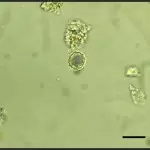

Environmental genomics generates massive datasets. The first human genome took more than 10 years to sequence. Today, with modern sequencing technology and Big Data analytics, the same task takes about 24 hours. Environmental scientists apply these techniques to study biodiversity, track disease spread in wildlife populations, and develop climate-resilient crop varieties.

DNA barcoding projects create reference libraries of genetic sequences for all species in ecosystems. When combined with environmental DNA (eDNA) sampling-where scientists detect organisms from trace DNA in water or soil-researchers can assess biodiversity without even seeing the organisms.
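The idea of matching an eDNA fragment against a reference library can be sketched with a toy similarity measure. The example below compares sets of short subsequences (k-mers) using Jaccard similarity. This is a simplified stand-in and not how production tools such as BLAST work, and the sequences and species names are hypothetical.

```python
def kmers(sequence, k=4):
    """Return the set of all overlapping substrings of length k."""
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


def jaccard(a, b):
    """Shared items divided by total distinct items across both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical reference "barcodes"; real libraries hold much longer sequences.
reference_library = {
    "species_A": "ATGCGTACGTTAGCCTAGGA",
    "species_B": "ATGCGTTTGACCGGTAACTA",
    "species_C": "GGCATTCAGGCTTAACCGTA",
}


def best_match(sample, library, k=4):
    """Return (name, score) for the library entry most similar to the sample."""
    sample_kmers = kmers(sample, k)
    scores = {name: jaccard(sample_kmers, kmers(seq, k))
              for name, seq in library.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical eDNA read: species_A with a single substituted base.
sample = "ATGCGTACGTTAGCATAGGA"
name, score = best_match(sample, reference_library)
print(f"Closest reference: {name} (similarity {score:.2f})")
```

Even with one base changed, most k-mers still overlap with the correct reference, which is why a similarity score can identify a species without an exact match.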

Career Opportunities: Bioinformatics specialists work in conservation genetics, agricultural research, environmental consulting, and public health. While not exclusively environmental, many bioinformatics roles address environmental questions. These positions typically require advanced degrees (MS or PhD) and offer salaries of $75,000-$120,000+.

What You'd Do: Analyze genetic sequences from environmental samples, develop databases of biodiversity genetic information, use computational tools to track disease mutations in wildlife, identify species from eDNA samples, and collaborate with field biologists to interpret genetic findings.

Essential Skills: Programming (Python, R, Perl), understanding of genetics and molecular biology, familiarity with bioinformatics software and databases (GenBank, BLAST), statistical analysis, and often Linux/Unix command line skills.

Urban Environmental Planning

Smart cities use Big Data to address environmental challenges in urban areas. City planners analyze traffic patterns, air quality sensors, energy consumption data, and demographic information to reduce pollution, optimize public transportation, and improve resource efficiency.

Big Data enables cities to identify environmental justice issues-for example, by revealing that certain neighborhoods consistently experience worse air quality or lack access to green spaces. This evidence informs targeted interventions and policy changes.
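A minimal sketch of this kind of comparison, assuming sensor readings that are already tagged by neighborhood: the code averages readings per neighborhood and flags any area well above the citywide level. The neighborhood names, readings, and the 1.5x ratio are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical daily PM2.5 readings (µg/m³) tagged by neighborhood; not real data.
readings = [
    ("Riverside", 9.0), ("Riverside", 11.0), ("Riverside", 10.0),
    ("Eastgate", 21.0), ("Eastgate", 24.0), ("Eastgate", 27.0),
    ("Hillcrest", 8.0), ("Hillcrest", 7.0), ("Hillcrest", 9.0),
]


def neighborhood_means(rows):
    """Group readings by neighborhood and return the mean for each."""
    grouped = defaultdict(list)
    for name, value in rows:
        grouped[name].append(value)
    return {name: mean(values) for name, values in grouped.items()}


def flag_disparities(means, ratio=1.5):
    """Return neighborhoods whose mean exceeds `ratio` times the average of all neighborhood means."""
    citywide = mean(means.values())
    return sorted(name for name, value in means.items() if value > ratio * citywide)


means = neighborhood_means(readings)
flagged = flag_disparities(means)
print("Neighborhood means:", means)
print("Flagged for follow-up:", flagged)
```

A real analysis would also account for sensor placement, time of day, and population exposure before drawing conclusions about any one neighborhood.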

Career Opportunities: Urban environmental analysts work for city planning departments, sustainability offices, transportation agencies, and consulting firms specializing in smart city technologies. Positions typically require master's degrees in urban planning, environmental science, or public policy with technical skills. Salaries range from $60,000 to $90,000.

What You'd Do: Analyze urban sensor networks monitoring air quality and noise, identify environmental health disparities across neighborhoods, model traffic flow to reduce emissions, evaluate green infrastructure effectiveness, and create data dashboards for city officials and the public.

Essential Skills: GIS, data visualization, urban planning principles, environmental science fundamentals, statistical analysis, and stakeholder communication skills to translate technical findings for policymakers.

Emerging Applications: AI and Machine Learning

The newest frontier combines Big Data with artificial intelligence. Machine learning models now predict wildfire spread with unprecedented accuracy, identify illegal fishing vessels from satellite imagery, optimize renewable energy generation based on weather forecasts, and detect subtle ecosystem changes that humans might miss.

Career Opportunities: Environmental data scientists specializing in AI/machine learning work for tech companies with sustainability initiatives, research institutions, and innovative environmental organizations. These cutting-edge positions often require advanced degrees and offer salaries of $90,000-$140,000+.

What You'd Do: Develop machine learning models for environmental predictions, train neural networks on satellite imagery to detect changes, create AI-powered early warning systems for environmental hazards, and research novel applications of AI to conservation and sustainability challenges.

Essential Skills: Advanced programming (Python with TensorFlow, PyTorch), machine learning theory and practice, environmental domain knowledge, big data frameworks (Spark, Hadoop), and cloud computing platforms (AWS, Google Cloud, Azure).

Skills and Educational Pathways

If these career opportunities excite you, here's how to build the skills and credentials employers are seeking.

Undergraduate Preparation

Most environmental data science careers require at least a bachelor's degree. You have several approaches:

Environmental Science Major with Data Emphasis: Major in environmental science, ecology, or a related field while taking additional courses in statistics, programming, and data analysis. This path gives you deep environmental knowledge with technical skills layered on top.

Double Major or Major/Minor Combination: Combine environmental science with computer science, mathematics, or statistics. This approach requires careful planning but creates a strong technical foundation.

Data Science Major with Environmental Focus: Some students major in data science or data analytics while taking environmental science courses as electives. This works well if you're confident in your technical abilities and can learn environmental concepts through coursework and internships.

Essential Undergraduate Courses:

- Statistics and Probability: Foundation for all data analysis

- Programming (Python or R): The core technical skill for data science

- Database Management: Understanding how data is stored and queried

- GIS: Spatial analysis is fundamental to environmental work

- Environmental Science Core: Ecology, environmental chemistry, earth systems

- Data Visualization: Communicating findings effectively

Graduate Programs

Many environmental data science positions prefer or require a master's degree. You have options:

Master's in Environmental Science with Data Specialization: Programs increasingly offer concentrations or certificates in environmental data science, allowing you to maintain an environmental focus while building advanced technical skills.

Master's in Data Science with Environmental Applications: Some data science master's programs allow you to focus your capstone projects and electives on environmental topics. This creates a strong technical foundation.

Specialized Programs: A growing number of universities offer dedicated "Environmental Data Science" or "Geospatial Analytics" master's degrees specifically designed for this career path.

MBA with Sustainability Focus: For those interested in corporate sustainability and ESG (Environmental, Social, Governance) analytics, combining data skills with business education creates unique opportunities.

Essential Technical Skills to Develop

| Skill Category | Specific Skills | How to Learn |
|---|---|---|
| Programming Languages | Python (pandas, NumPy, scikit-learn), R (tidyverse, ggplot2), SQL for databases | University courses, online platforms (Coursera, DataCamp), and practice with environmental datasets |
| Statistical Analysis | Hypothesis testing, regression analysis, time series analysis, and experimental design | Statistics courses, applied coursework in research methods, and real research projects |
| GIS and Spatial Analysis | ArcGIS or QGIS, remote sensing, spatial statistics, cartography principles | GIS courses, ESRI certifications, hands-on projects with real spatial data |
| Machine Learning | Supervised/unsupervised learning, random forests, neural networks, model validation | Advanced coursework, online specializations, and Kaggle competitions with environmental data |
| Data Visualization | Matplotlib, ggplot2, Tableau, Power BI, D3.js for interactive web visualizations | Specialized courses, practice creating visualizations for different audiences |
| Cloud Computing | AWS, Google Cloud Platform, Azure (basics of cloud storage and computing) | Cloud provider tutorials, internships using cloud platforms, certifications |

Building Your Portfolio

Employers want to see that you can apply these skills to real environmental problems. Build a portfolio including:

- Personal Projects: Analyze publicly available environmental data (EPA air quality, NOAA climate data, wildlife observation databases) and share your findings on GitHub or a personal website.

- Internships: Summer research positions, government agency internships, or work with environmental organizations provide hands-on experience and professional connections.

- Research Assistantships: If pursuing graduate study, research assistant positions offer paid opportunities to work on real environmental data science projects.

- Capstone Projects: Choose environmental topics for your data science projects and coursework when possible, building a portfolio that demonstrates domain expertise.

- Competitions and Hackathons: Environmental data science competitions (often hosted by organizations such as the World Bank or conservation groups) offer real-world problems and deadlines.

Certifications That Help

While not always required, certain certifications demonstrate competency:

- GISP (GIS Professional): Industry-recognized credential for GIS expertise

- AWS or Google Cloud Certifications: Demonstrate cloud computing skills

- SAS or Tableau Certifications: Specific software competencies valued by some employers

- Microsoft Azure Data Scientist Associate: For roles involving Microsoft's data platform

Career Outlook and Salaries

The job market for environmental professionals with data science skills is strong and growing. Let's look at the numbers:

Salary Ranges by Position

| Position | Entry Level (0-2 years) | Mid-Career (3-7 years) | Senior (8+ years) |
|---|---|---|---|
| Environmental Data Analyst | $55,000 - $70,000 | $70,000 - $90,000 | $90,000 - $115,000 |
| GIS Specialist | $50,000 - $65,000 | $65,000 - $85,000 | $85,000 - $105,000 |
| Environmental Data Scientist | $75,000 - $95,000 | $95,000 - $125,000 | $125,000 - $160,000+ |
| Conservation Data Specialist | $50,000 - $65,000 | $65,000 - $85,000 | $85,000 - $110,000 |
| Climate Data Analyst | $65,000 - $80,000 | $80,000 - $110,000 | $110,000 - $140,000 |
| Urban Environmental Analyst | $55,000 - $70,000 | $70,000 - $90,000 | $90,000 - $120,000 |

Salaries vary significantly by location (coastal cities and tech hubs pay more), employer type (federal government and private tech companies typically offer higher salaries than non-profits), and specific skill set (machine learning expertise commands premium compensation).

Job Growth Projections

The Bureau of Labor Statistics projects growth for related occupations through 2031, with the fastest gains in data-focused roles:

- Data Scientists: 36% growth (much faster than average)

- Environmental Scientists and Specialists: 6% growth (as fast as average)

- Statisticians: 33% growth (much faster than average)

- Geographers and GIS Specialists: 5% growth (as fast as average)

The intersection of these fields-environmental science plus data science-creates even stronger opportunities. Many environmental organizations report difficulty finding qualified candidates with both environmental knowledge and technical data skills, suggesting demand exceeds supply.

Where Environmental Data Scientists Work

- Federal Agencies: EPA, NOAA, NASA, USGS, Department of Energy, National Park Service

- State and Local Government: Environmental quality departments, public health agencies, urban planning offices

- Research Institutions: Universities, national laboratories, climate research centers

- Non-Profit Conservation: The Nature Conservancy, World Wildlife Fund, Conservation International, regional land trusts

- Environmental Consulting: Firms conducting impact assessments, sustainability audits, and compliance monitoring

- Technology Companies: Google, Microsoft, Amazon, and startups working on climate tech and sustainability

- Agriculture and Food: Agtech companies, agricultural research stations, food supply chain companies

- Energy Sector: Renewable energy companies, utilities, energy efficiency firms

Advantages of Big Data in Environmental Science

Big Data has fundamentally improved how environmental science is practiced:

Faster Analysis and Decision-Making

Analyses that once took months now take hours or minutes. Climate models that inform international agreements, pollution tracking that protects public health, and conservation monitoring that prevents extinctions all move faster thanks to Big Data analytics. This speed matters when responding to oil spills, tracking disease outbreaks in wildlife, or predicting extreme weather events.

Error Reduction Through Scale

Larger datasets reduce the influence of random anomalies and measurement noise. When you're analyzing millions of data points rather than hundreds, individual outliers have less effect on overall conclusions. This statistical advantage makes findings more reliable and gives decision-makers greater confidence in recommendations, provided the data are free of systematic bias, which does not shrink as a dataset grows.
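A minimal sketch of this effect, assuming independent measurements with a known noise level: the standard error of an average falls in proportion to the square root of the sample size. The true value, noise level, and calibration offset below are hypothetical. The offset is included to show what scale alone does not correct.

```python
import math
import random

TRUE_VALUE = 20.0   # hypothetical true water temperature in °C
NOISE_SD = 2.0      # hypothetical random measurement noise (standard deviation)


def standard_error(n, sd=NOISE_SD):
    """Standard error of the mean of n independent measurements."""
    return sd / math.sqrt(n)


def simulated_mean(n, bias=0.0, seed=0):
    """Average n simulated measurements, optionally with a constant calibration offset."""
    rng = random.Random(seed)
    return sum(rng.gauss(TRUE_VALUE + bias, NOISE_SD) for _ in range(n)) / n


for n in (100, 10_000, 100_000):
    print(
        f"n={n:>7,}  standard_error={standard_error(n):.4f}  "
        f"mean={simulated_mean(n):.3f}  "
        f"mean_with_1C_offset={simulated_mean(n, bias=1.0):.3f}"
    )
```

As the sample grows, the unbiased average settles close to 20.0, while the average from the miscalibrated instrument settles close to 21.0. More data makes the wrong answer more precise, which is why calibration and quality control still matter at scale.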

Pattern Recognition Impossible for Humans

Machine learning algorithms identify subtle patterns in environmental data that humans would never notice. For example, AI models discovered that certain combinations of ocean temperature, atmospheric pressure, and wind patterns precede coral bleaching events-enabling early warning systems that help protect reefs.

Integration Across Disciplines

Big Data allows researchers to combine disparate information sources. Urban planners can now analyze traffic data, air quality sensors, public health records, and socioeconomic information simultaneously, revealing connections between transportation infrastructure and environmental health disparities. This holistic approach produces more effective solutions.

Resource Optimization

Environmental work often operates under tight budgets. Big Data helps organizations use limited resources more effectively. Conservation groups use predictive models to prioritize which lands to protect. Cities use sensor data to optimize operations at water treatment plants. Agricultural extension services use satellite data to advise farmers without visiting every field.

Current Challenges and Considerations

Despite its transformative potential, Big Data in environmental science faces important challenges that upcoming professionals should understand:

Technical Infrastructure Requirements

Working with Big Data requires significant computing resources-powerful processors, massive storage capacity, and often cloud computing platforms. Smaller environmental organizations may lack the budget for these tools, creating a gap between large research institutions with resources and community-based groups doing critical local work. This digital divide could concentrate expertise and decision-making power in well-funded organizations.

Skill Gaps and Training Needs

Environmental professionals trained before the data science revolution often lack technical skills, while data scientists may lack environmental domain knowledge. Bridging this gap requires ongoing professional development and, for students, carefully designed interdisciplinary education. The field needs people who can speak both languages fluently: ecology and statistics, conservation and coding.

Data Quality and Standardization

Environmental data comes from countless sources using different methods, units, and quality control procedures. Combining a city's air quality sensors with federal monitoring stations and satellite observations requires careful harmonization. Poor data quality can lead to incorrect conclusions that undermine conservation efforts or policy decisions. Environmental data analysts spend significant time on data cleaning and validation-less glamorous than modeling but absolutely essential.
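Harmonization of this kind can be sketched as mapping each source's records onto one shared schema and unit. The example below assumes three hypothetical sources reporting ozone with different field names, units, and quality flags. All field names, values, and the quality cutoff are invented for illustration.

```python
# Hypothetical records from three sources; field names and values are invented.
city_sensor = {"site": "city-01", "o3_ppb": 62.0, "qc_flag": "ok"}
federal_station = {"station_id": "fed-17", "ozone_ppm": 0.058, "valid": True}
satellite_pixel = {"pixel": "sat-3x9", "o3_ppb": None, "quality": 0.2}

MIN_SATELLITE_QUALITY = 0.5  # hypothetical quality cutoff


def ppm_to_ppb(value_ppm):
    """Convert parts per million to parts per billion."""
    return value_ppm * 1000.0


def harmonize(record):
    """Return {'source', 'ozone_ppb'} in a common schema, or None if the record fails QC."""
    if "site" in record:                       # city sensor format, already in ppb
        if record["qc_flag"] != "ok" or record["o3_ppb"] is None:
            return None
        return {"source": record["site"], "ozone_ppb": round(record["o3_ppb"], 1)}
    if "station_id" in record:                 # federal station format, reported in ppm
        if not record["valid"]:
            return None
        return {"source": record["station_id"],
                "ozone_ppb": round(ppm_to_ppb(record["ozone_ppm"]), 1)}
    if "pixel" in record:                      # satellite format with a quality score
        if record["o3_ppb"] is None or record["quality"] < MIN_SATELLITE_QUALITY:
            return None
        return {"source": record["pixel"], "ozone_ppb": round(record["o3_ppb"], 1)}
    raise ValueError("Unrecognized record format")


raw_records = [city_sensor, federal_station, satellite_pixel]
clean_records = [row for row in map(harmonize, raw_records) if row is not None]
print(clean_records)
```

Keeping the unit conversion and the quality rules in one function makes the cleaning step auditable, which matters when results feed into policy decisions.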

Privacy and Ethical Concerns

Some environmental data involves human populations-health records linked to pollution exposure, demographic information for environmental justice analyses, or location data from citizen science apps. Protecting individual privacy while conducting important research requires careful protocol design and strict data governance. Students entering this field should understand data ethics and relevant regulations, such as HIPAA.

Access and Equity

Not all environmental data is publicly available. Private companies, research institutions, and government agencies sometimes restrict access to valuable datasets. The open science movement advocates for making environmental data freely accessible, arguing that climate and environmental challenges require collaborative solutions. However, concerns about commercial use, data misuse, or security sometimes justify restrictions. Finding the right balance remains an ongoing debate.

Interpretation Challenges

Big Data can identify correlations (things that occur together) but doesn't automatically reveal causation (one thing causing another). Environmental systems are complex, and spurious correlations are common. For example, ice cream sales correlate with drowning deaths, but ice cream doesn't cause drowning-both increase in summer. Environmental data scientists must combine statistical analysis with ecological understanding to draw valid conclusions.
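The ice cream example can be simulated in a few lines. In this sketch, temperature drives both ice cream sales and drownings, and neither affects the other. All coefficients and noise levels are hypothetical. The code measures the raw correlation, then removes the temperature effect from both series and measures the correlation again.

```python
import random


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def residuals(y, x):
    """What is left of y after subtracting a least-squares straight-line fit on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
             / sum((a - mean_x) ** 2 for a in x))
    return [b - (mean_y + slope * (a - mean_x)) for a, b in zip(x, y)]


rng = random.Random(42)
# Hypothetical daily data: temperature influences both outcomes independently.
temperature = [rng.uniform(0.0, 35.0) for _ in range(2000)]
ice_cream_sales = [10.0 * t + rng.gauss(0.0, 30.0) for t in temperature]
drownings = [0.2 * t + rng.gauss(0.0, 1.5) for t in temperature]

raw_corr = pearson(ice_cream_sales, drownings)
adjusted_corr = pearson(residuals(ice_cream_sales, temperature),
                        residuals(drownings, temperature))

print(f"Raw correlation:                  {raw_corr:.2f}")
print(f"Correlation after removing temp:  {adjusted_corr:.2f}")
```

The raw correlation is strong, but it largely disappears once the shared driver is accounted for. Knowing which variable to control for is where ecological understanding comes in, since the statistics alone do not reveal the confounder.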

Getting Started: Next Steps for Students

If environmental data science interests you, here's how to begin building your path:

As an Undergraduate:

- Take introductory statistics and programming courses early-they're foundational for everything else

- Seek research assistant positions with professors working on data-intensive environmental projects

- Complete summer internships that combine environmental work with data analysis

- Join student organizations related to both environmental science and data science/technology

- Build a portfolio by analyzing public environmental datasets and sharing your work on GitHub or a personal website

Exploring Graduate Programs:

- Research programs with faculty working at the intersection of environmental science and data science

- Look for assistantship opportunities that provide funding while building experience

- Consider whether you want environmental science with data specialization or data science with environmental applications

- Attend virtual or in-person information sessions to assess program culture and resources

- Connect with current graduate students to understand their experiences

Building Technical Skills:

- Start with Python or R: choose one and become proficient before learning others

- Learn GIS through university courses or Esri's online training resources

- Practice with real environmental datasets available from EPA, NOAA, NASA, and conservation organizations

- Take online courses in data science fundamentals (Coursera, edX, and DataCamp offer excellent options)

- Participate in data analysis competitions or hackathons focused on environmental challenges
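A first practice project can be very small. The sketch below, using only Python's standard library, shows the typical shape of such an exercise: read a CSV, skip missing readings, and summarize by month. The sample rows and the column names (date, site, pm25) are made up for illustration and do not reflect any real agency's file format; with a real download you would read from the file instead of the inline string.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Made-up sample rows standing in for a downloaded CSV file. The column
# names (date, site, pm25) are hypothetical, not a real agency schema.
SAMPLE_CSV = """date,site,pm25
2024-01-03,A,12.0
2024-01-17,A,18.0
2024-02-05,A,9.0
2024-02-20,A,
2024-02-25,A,11.0
"""


def monthly_means(csv_text):
    """Return {YYYY-MM: mean pm25}, skipping rows with a missing reading."""
    by_month = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["pm25"].strip() == "":
            continue  # missing value: real datasets often have gaps
        month = row["date"][:7]  # "2024-01-03" -> "2024-01"
        by_month[month].append(float(row["pm25"]))
    return {month: mean(values) for month, values in sorted(by_month.items())}


result = monthly_means(SAMPLE_CSV)
for month, value in result.items():
    print(month, round(value, 1))
```

Once this pattern feels comfortable, the same steps (load, clean, group, summarize) carry over to larger datasets and to libraries built for them.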

Gaining Experience:

- Apply for environmental science internships that mention data analysis in their descriptions

- Volunteer with local environmental organizations to help with data management or analysis projects

- Assist professors with data analysis for their research in exchange for learning opportunities

- Attend conferences such as the annual meetings of the Ecological Society of America or the American Geophysical Union, where data science methods are discussed

Frequently Asked Questions

Do I need a computer science degree to work with Big Data in environmental science?

No, you don't need a computer science degree. Most environmental data science positions prioritize environmental knowledge combined with data analysis skills over pure computer science expertise. An environmental science degree with strong coursework in statistics, programming, and data analysis is often the ideal background. Many professionals enter the field with bachelor's degrees in environmental science and then develop technical skills through graduate programs, certifications, or on-the-job training.

Which is more important: environmental knowledge or technical skills?

Both are essential, but the ideal balance depends on the specific role. For research positions at environmental agencies or conservation organizations, deep environmental knowledge combined with solid data skills typically works best. For positions at tech companies working on environmental problems, strong technical skills with basic environmental literacy may be sufficient. The most successful professionals develop competency in both areas: you need to understand the science to ask the right questions and the technical methods to answer them.

What programming language should I learn first?

Python is generally the best starting point for environmental data science. It's widely used across environmental research, has excellent libraries for data analysis (pandas, NumPy) and visualization (matplotlib, seaborn), and is beginner-friendly. R is also popular, especially in ecological research and statistics. Rather than trying to learn both simultaneously, become proficient in one first. Many environmental data science job postings list Python as a requirement or strong preference.

Can I work remotely in environmental data science?

Many environmental data science positions offer remote or hybrid work options, especially since the pandemic accelerated remote work adoption. Data analysis can often be done from anywhere with a reliable internet connection. However, some positions, particularly those involving fieldwork, laboratory work, or regular meetings with stakeholders, require more in-person time. Government positions are increasingly offering flexibility, while conservation organizations and consulting firms vary in their policies.

Is machine learning necessary for environmental data science careers?

Not all environmental data science positions require machine learning expertise, especially at entry levels. Many roles focus on traditional statistical analysis, data visualization, and GIS. However, machine learning skills are increasingly valuable and can significantly expand your career options and earning potential. You can start with solid foundations in statistics and programming, then add machine learning skills as you advance in your career. Advanced positions and research roles often do require machine learning knowledge.

How competitive is the environmental data science job market?

The job market is currently favorable for candidates with both environmental knowledge and data skills. Many organizations report difficulty finding qualified applicants who combine these competencies. While competition exists for prestigious positions at places like NASA or top conservation organizations, the overall demand exceeds supply. The key is developing a portfolio demonstrating real skills: internships, projects, and coursework that show you can apply data science to environmental problems.

What's the difference between an environmental data analyst and an environmental data scientist?

The distinction is somewhat fluid, but generally, data analysts focus on examining existing data, creating reports, and answering specific questions using established methods. Data scientists develop new analytical approaches, build predictive models, and often work with more complex machine learning techniques. Data scientist positions typically require more advanced education (master's or PhD) and offer higher salaries. Many professionals start as analysts and transition to scientist roles as they gain experience and skills.

Key Takeaways

- Growing Career Field: Environmental data science combines conservation work with cutting-edge technology, creating careers that didn't exist a decade ago. Positions range from GIS specialists ($50,000-$105,000) to senior environmental data scientists ($125,000+), with demand consistently exceeding supply for qualified candidates.

- Multiple Educational Pathways: You can enter this field through environmental science programs with data coursework, data science programs with environmental focus, or interdisciplinary programs specifically designed for environmental data science. The key is developing competency in both environmental science and data analysis rather than perfecting one area.

- Essential Technical Skills: Python or R programming, statistics, GIS, and data visualization form the foundation. Advanced positions increasingly require machine learning knowledge and experience with cloud computing platforms. Build these skills progressively through coursework, personal projects, and internships.

- Real-World Impact: Big Data enables environmental work that was impossible a generation ago: real-time pollution monitoring that protects public health, AI-powered conservation that helps prevent extinctions, climate models that inform international policy, and precision agriculture that feeds growing populations sustainably. Your analytical work directly contributes to solving critical environmental challenges.

- Diverse Career Options: Environmental data science skills open doors across federal agencies (EPA, NOAA, NASA), state government, research universities, conservation organizations, environmental consulting firms, tech companies with sustainability initiatives, and the growing agtech sector. The breadth of options allows you to align your career with your specific environmental interests.


2024 US Bureau of Labor Statistics salary and job growth figures for Environmental Scientists and Specialists and Data Scientists reflect national data, not school-specific information. Salary ranges for environmental data science positions are compiled from BLS occupational data, industry salary surveys, and environmental sector job postings. Conditions in your area may vary. Data accessed January 2026.

