Climate change science traces back to the 1820s when scientists first proposed the "Ice Age" and "Greenhouse Effect" concepts. The field evolved from 19th-century fossil fuel concerns to modern climate modeling in the 1990s. Today's environmental scientists build on 200+ years of atmospheric research, ice core analysis, and computer modeling to understand and address global temperature changes.

If you're pursuing environmental science, you're joining a field with a fascinating 200-year history. Climate change science didn't emerge overnight—it's the result of centuries of atmospheric research, ice-core analysis, and increasingly sophisticated climate modeling that have transformed our understanding of Earth's climate systems.

Understanding how climate science evolved helps you appreciate the interdisciplinary nature of environmental science today. From 19th-century greenhouse gas discoveries to modern IPCC reports, each generation of scientists built on previous work to create the field you're studying now.

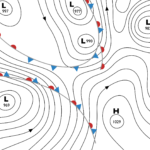

Environmental science examines the effects of natural and anthropogenic processes and the interactions among Earth's physical components on the environment, particularly the impacts of human activities. It encompasses numerous disciplines: climatology, oceanography, atmospheric sciences, meteorology, and ecology. The field draws heavily on biology, physics, geology, and other established sciences to develop a comprehensive understanding of environmental systems.

Jump to Section

- 19th Century Beginnings

- Early 20th Century Progress

- Mid-Century Developments

- Modern Climate Science Emerges

- The Golden Age and Beyond

- Frequently Asked Questions

- Key Takeaways

19th Century Beginnings

We can trace the history of climate change science back to the 19th century, when researchers first proposed the concepts of the "Ice Age" and the "Greenhouse Effect." Even in the 1820s, scientists understood the properties of certain gases and their ability to trap solar heat.

Although both concepts took time to gain acceptance, once the evidence became undeniable, the scientific community began to ask critical questions: How did ice ages occur? What caused them? Why did they end? Could another occur?

The two theories became inextricably linked as researchers proposed that lower atmospheric greenhouse gas levels caused ice ages, while higher levels led to warmer temperatures. Despite understanding these greenhouse gas properties, scientists in the growing industrialized world initially estimated that hundreds or thousands of years of fossil fuel consumption would be required to produce significant warming. As industrialization expanded through the 19th century, that estimate was revised to several centuries.(3)

Early 20th Century Progress

The early 20th century brought fierce criticism of existing theories. Skeptics argued that global warming models were overly simplistic, failing to account for local weather variations such as humidity. Flawed early 19th-century tests were quickly dismissed, and scientific interest temporarily waned.

It took until the 1930s for researchers to document the measurable effects of burning fossil fuels on the climate. Changes since the Industrial Revolution had become increasingly noticeable between the wars. However, the prevailing consensus suggested Earth was entering a natural warming phase, with fossil fuels having minimal impact. Dissenting voices faced skepticism, and admittedly flawed tests produced mixed results.

Only Guy Stewart Callendar argued the changes were anthropogenic—caused by human activity. He viewed this positively, believing it would merely delay the next ice age. Callendar estimated that the following century would bring a two-degree rise and urged researchers to pay closer attention to climate data.

Mid-Century Developments (1940s-1960s)

In the 1940s, experts recorded a temperature increase of approximately 1.0-1.3°C in the North Atlantic since the late 19th century. They attributed it to the only greenhouse gases known at the time: carbon dioxide and water vapor. Studies over the following decade confirmed this temperature rise. It wasn't until the 1970s that researchers would identify other greenhouse gases and their effects: CFCs, nitrous oxide, and methane.

The dawn of the nuclear age in the 1950s and 1960s gave researchers an unexpected opportunity. Public awareness of the potential environmental damage of nuclear weapons enabled scientists to study the decay of carbon-14 isotopes in the atmosphere. This research proved fundamental to understanding recent climate change, particularly from burning carbon sources.

Radiocarbon (carbon-14) dating remains essential for determining the age of organic objects from recent history, with a limit of approximately 50,000 years. In the same era, Rachel Carson's groundbreaking book Silent Spring brought to public attention the real effects human activities were already having on our planet.
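The roughly 50,000-year limit follows from how quickly carbon-14 decays. As a minimal sketch, assuming the commonly cited half-life of about 5,730 years (a figure not given in this article) and hypothetical function names, the calculation might look like this in Python:

```python
import math

# Assumption: commonly cited carbon-14 half-life, not stated in the article.
HALF_LIFE_C14_YEARS = 5730.0


def fraction_remaining(age_years: float) -> float:
    """Fraction of the original carbon-14 left after age_years."""
    if age_years < 0:
        raise ValueError("age_years must be non-negative")
    return 0.5 ** (age_years / HALF_LIFE_C14_YEARS)


def radiocarbon_age(fraction: float) -> float:
    """Estimated age in years from the measured fraction of carbon-14 left."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in the interval (0, 1]")
    # Each halving of the remaining fraction corresponds to one half-life.
    return -HALF_LIFE_C14_YEARS * math.log2(fraction)


if __name__ == "__main__":
    for age in (5730, 20000, 50000):
        print(age, "years:", fraction_remaining(age))
```

Under these assumptions, well under 1% of the original carbon-14 remains after 50,000 years, which is why older samples become difficult to measure reliably.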

The 1950s also marked the dawn of the computer era, fundamentally transforming climate research. Computers analyzed each layer of Earth's upper atmosphere far more easily than manual methods, putting to rest the simplistic data and models of the early 20th century. This provided the first confirmation that increasing carbon dioxide levels would cause warming over time. Furthermore, research increasingly confirmed that doubling carbon levels would lead to a global average temperature increase of 3-4 degrees.

Modern Climate Science Emerges (1970s-1980s)

By the 1970s, data from diverse disciplines—paleontology, paleobotany, archaeology, and anthropology—led to an understanding that Earth's climate has always changed and that the factors driving those changes are well documented. These new disciplines brought broader datasets, revealing not only rising temperatures but also potential consequences. Scientists began warning of critical climate changes around 2000.

Unfortunately for public opinion, a few fringe writers speculated that a new Ice Age might arrive within the next few centuries, though even they questioned their own data. Some media outlets amplified these speculations, fueling climate skepticism for decades.

The international community—both governments and research bodies—grew increasingly concerned about the effects of human activities on the climate and the planetary future. In 1972, the United Nations established UNEP (United Nations Environment Programme) following the first United Nations Conference on the Human Environment in Stockholm, Sweden, to address environmental issues, including climate change.

Paleodata from ice cores has become fundamental to research on the effects of climate change on global environments and ecosystems. During this period, researchers identified substantial increases in greenhouse gas concentrations, particularly methane, since the Industrial Revolution. The abundance of particles over the 19th century far exceeded all fluctuations of the previous half-million years—insights that students studying paleoclimate methods examine today.

The 1970s also spotlighted chlorofluorocarbons (CFCs). Researchers found that CFCs were up to 10,000 times more effective at absorbing infrared radiation than carbon dioxide, depending on the timescale and atmospheric context. They also quickly discovered the chemical's devastating effect on the ozone layer—Earth's protective shield against the sun's most harmful rays. Once confirmed, the substance was banned, with massive implications for household products, as most aerosols contained the chemical. Critically, CFCs were identified as purely industrial products that didn't exist in nature.

The evidence mounted. 1988 was the hottest year on record up to that point (several more record years would follow), and it also saw the founding of the Intergovernmental Panel on Climate Change (IPCC). By 1988, scientists knew that maintaining global temperatures required Earth to radiate as much energy as it received from the sun, and that an imbalance was growing.(2)
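That balance between incoming and outgoing energy can be written as a short calculation. The sketch below is a textbook zero-dimensional energy balance, not a model used by the IPCC. The solar constant (about 1361 W/m²) and planetary albedo (about 0.30) are assumed round values that do not come from this article, and the function names are hypothetical.

```python
# Stefan-Boltzmann constant in W m^-2 K^-4.
STEFAN_BOLTZMANN = 5.670374419e-8
# Assumptions (not from the article): approximate solar constant and albedo.
SOLAR_CONSTANT = 1361.0  # W m^-2 arriving at the top of the atmosphere
ALBEDO = 0.30            # fraction of sunlight reflected back to space


def absorbed_solar_flux(solar_constant: float = SOLAR_CONSTANT,
                        albedo: float = ALBEDO) -> float:
    """Average sunlight absorbed per square metre of Earth's surface."""
    # Divide by 4: Earth intercepts sunlight as a disc but radiates as a sphere.
    return solar_constant * (1.0 - albedo) / 4.0


def emitted_flux(temperature_k: float) -> float:
    """Energy radiated per square metre by a blackbody at temperature_k."""
    return STEFAN_BOLTZMANN * temperature_k ** 4


def equilibrium_temperature(solar_constant: float = SOLAR_CONSTANT,
                            albedo: float = ALBEDO) -> float:
    """Temperature at which emitted energy equals absorbed energy."""
    return (absorbed_solar_flux(solar_constant, albedo) / STEFAN_BOLTZMANN) ** 0.25


def net_imbalance(temperature_k: float) -> float:
    """Absorbed minus emitted flux; positive means the planet gains energy."""
    return absorbed_solar_flux() - emitted_flux(temperature_k)


if __name__ == "__main__":
    print("Equilibrium temperature (K):", equilibrium_temperature())
```

With these assumed inputs, the balance point is an effective radiating temperature near 255 K. Greenhouse gases reduce the energy escaping to space at a given surface temperature, so a positive imbalance persists until the planet warms enough to restore balance.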

In the same year, British Prime Minister Margaret Thatcher, whose background was in chemistry, warned about greenhouse gases being pumped into the atmosphere and their future effects. She called for a global treaty to address the problem for future generations.

The Golden Age and Beyond (1990s-Present)

Many consider the 1990s the "Golden Age" of environmental science. Major climate science journals were launched in the late 1980s, and the discipline began to integrate the widest possible range of data and methods. This era marked the dawn of climate modeling, on which the IPCC based its reports. The First Assessment Report (FAR) was published in 1990.

Following UN calls to act on carbon emissions, protocols established in Montreal and London sought to phase out the most environmentally damaging substances. In the USA, the Clean Air Act Amendments of 1990 addressed acid rain, ozone depletion, air pollution, and other environmental issues. Most Western nations simultaneously introduced similar legislative standards.

Most critically for the science, ice cores from Antarctica demonstrated that temperature rises preceded increases in carbon dioxide levels, putting to rest the notion that ice ages were driven purely by carbon dioxide fluctuations. Ice core data have proven extremely useful for reconstructing paleoclimate conditions. Each layer of snow and ice differs in texture and composition due to seasonal variation and other natural fluctuations. When these records are correlated with other data types, scientists can determine what the climate was like in any given season with relative ease.

As data became more complex, understanding and explaining more intricate systems, causes, and effects became essential. The "feedback loop" concept, now common but still emerging in the 1990s, describes how an initial change in climate can trigger further changes that amplify or dampen it, as in the massive environmental shifts surrounding ice ages. Each "forcing agent" had different effects on climate, sometimes positive, sometimes negative. Accounting for all these factors required greater modeling sophistication and regular refinement.

Today's models are considered highly accurate, and, if anything, the IPCC has underestimated the effects of various forcing agents on the environment (17). Climate sensitivity drives modern research, with millions of years of data to inform conclusions. Mid-2000s findings were striking: doubling greenhouse gas levels typically led to a 3°C increase in global temperature in paleoclimate records.
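Framing sensitivity "per doubling" implies a logarithmic relationship between concentration and warming. The sketch below uses that widely used simplification together with the 3°C-per-doubling figure quoted above. The function name and the example concentrations are illustrative assumptions, and real climate models account for many more factors.

```python
import math

# From the article: roughly 3 degrees C of warming per doubling.
SENSITIVITY_C_PER_DOUBLING = 3.0


def equilibrium_warming(concentration_ppm: float,
                        baseline_ppm: float,
                        sensitivity: float = SENSITIVITY_C_PER_DOUBLING) -> float:
    """Estimated long-term warming (degrees C) relative to a baseline level.

    Assumes warming scales with the number of doublings of concentration.
    """
    if concentration_ppm <= 0 or baseline_ppm <= 0:
        raise ValueError("concentrations must be positive")
    doublings = math.log2(concentration_ppm / baseline_ppm)
    return sensitivity * doublings


if __name__ == "__main__":
    # Illustrative values only: 280 ppm is used here as a hypothetical baseline.
    for ppm in (280, 420, 560):
        print(ppm, "ppm:", equilibrium_warming(ppm, 280))
```

Because the relationship is logarithmic, each further doubling adds the same increment of warming under this simplification, rather than each added unit of gas having the same effect.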

Modeling drives climate studies today. Early studies were contradictory, and it wasn't until 2005, when researchers began to study the oceans in greater depth, that they could see and understand the full implications of greenhouse gas emissions. Land temperatures rise more rapidly than ocean temperatures, which explains why the Northern Hemisphere, with more of the world's landmass, has recorded greater temperature increases.

Overall, we now know the global mean temperature rose approximately 1.5°F (0.85°C) between 1880 and 2012. The documented warming trend has accelerated dramatically. Recent years have continued to break temperature records, with extreme weather events, from California's intensifying wildfires to unprecedented European heatwaves, demonstrating climate patterns that scientists predicted decades ago.

For students entering climatology or atmospheric science today, this historical context underscores why climate research, policy development, and environmental consulting have become such critical career paths. The field continues evolving as new data, modeling techniques, and interdisciplinary approaches emerge.

Frequently Asked Questions

When did scientists first discover climate change?

Scientists first proposed the concepts of the "Ice Age" and "Greenhouse Effect" in the 1820s. However, it wasn't until the 1930s that researchers began documenting measurable changes attributable to fossil fuel burning, and not until the 1970s that comprehensive data confirmed human-driven warming trends.

What was the "Golden Age" of environmental science?

The 1990s are considered the "Golden Age" because major climate science journals launched, the IPCC began publishing assessment reports, and climate modeling integrated the widest range of data sources. This decade established environmental science as a rigorous, interdisciplinary field, supported by sophisticated computer models and comprehensive paleoclimate data.

How has climate change science education evolved?

Modern environmental science programs combine climatology, oceanography, atmospheric sciences, meteorology, and ecology, disciplines that developed separately but now work together. Students today learn computer modeling, paleoclimate analysis, and policy applications that didn't exist when climate science began. The field now requires an understanding of complex feedback loops, forcing agents, and interdisciplinary data integration.

Why is ice core data important to climate science?

Ice cores preserve atmospheric conditions from hundreds of thousands of years ago. Each layer's texture and composition reveals temperature, precipitation, and greenhouse gas levels from that period. These data demonstrate that temperature rises preceded carbon dioxide increases, transforming our understanding of climate feedback loops and providing the paleoclimate foundation on which modern research builds.

What careers involve climate change research?

Environmental scientists work in climate modeling, paleoclimate research, atmospheric science, policy development, and environmental consulting. Many positions require graduate degrees in environmental science, climatology, or related fields, with specializations in data analysis, field research, or policy applications. Career paths include climatologist, atmospheric scientist, environmental consultant, and climate policy analyst.

Key Takeaways

- 200-Year Evolution: Climate change science has evolved from 19th-century greenhouse gas discoveries to modern computer modeling and paleoclimate analysis, with each generation building on prior research to create today's comprehensive field.

- Interdisciplinary Foundation: Environmental science combines climatology, oceanography, atmospheric sciences, meteorology, ecology, and other disciplines to study climate patterns, requiring students to understand diverse scientific methods and data sources.

- Critical Milestones: The 1988 founding of the IPCC and the 1990s "Golden Age" established climate science as a rigorous, data-driven discipline, launching major journals and comprehensive climate modeling that guides research today.

- Technological Transformation: Ice-core data, radiocarbon dating, and computer modeling have revolutionized our ability to understand historical climate patterns and to predict future changes, with models now accurately accounting for complex feedback loops and forcing agents.

- Growing Career Opportunities: The field shifted from theoretical concerns about effects centuries away to documented 0.85°C warming between 1880 and 2012 and increasing extreme weather events, creating demand for climatologists, atmospheric scientists, and environmental policy professionals.

